E-Science: Massive experiments, global networks


Elizabeth City State University in North Carolina is not the first name that pops up in conversations about centers of polar science.

Tucked at the tip of a branch of Albemarle Sound, along the state’s northeast coast, the well-regarded, historically African-American university focuses largely on undergraduate education. But it’s also taking part in cutting-edge Arctic and Antarctic science as a key player in PolarGrid – a powerful, sophisticated computer network researchers use to analyze images of ice sheets in Greenland and Antarctica and model their behavior.

It’s part of the burgeoning world of e-Science – a realm where the questions are big, cutting across once-disparate disciplines. And the answers often demand enormous amounts of number crunching through networks of interconnected computing centers at universities and laboratories around the world – a process known as grid computing.

E-Science and its computing networks – with their ability to link scientists at schools large and small to high-powered computers, large repositories of data, and the sophisticated tools to analyze information and display the results – are “leveling the playing field of opportunity” in science, says Gerry McCartney, vice president for information technology and the chief information officer at Purdue University in West Lafayette, Ind.

That’s certainly the expectation with PolarGrid, notes Linda Bailey Hayden, who heads Elizabeth City State’s Center of Excellence for Remote Sensing Education and Science. She is one of the lead researchers on the PolarGrid team. The group, led by Indiana University computer scientist Geoffrey Fox, also includes researchers from the University of Kansas, Ohio State University, and Pennsylvania State University.

A key PolarGrid objective is to provide scientists in the field with “real-time” access to the wider grid network so they can review ice-sheet images, model ice-sheet activity, and use the information to adjust their field experiments to changing conditions while the researchers are still on the ice.

Building on PolarGrid as an educational tool, Dr. Hayden says, she and her colleagues are proposing that the university offer a master’s degree in computer science and a PhD in environmental remote sensing, broadening access and exposure to top-flight science for what the team has termed “traditionally underserved groups.”

On a global scale, one of the leading examples of e-Science’s potential to draw on the talents of scientists outside the usual cast of major research institutions is the European Organization for Nuclear Research, known by its French acronym, CERN.

Last week, CERN formally launched the grid network it will use to analyze the data from the laboratory’s Large Hadron Collider, an enormous particle accelerator expected to begin full operation next year. The collider is expected to generate 15 million gigabytes of data each year – roughly the capacity of 125,000 iPods.
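As a rough check on that comparison, the arithmetic can be sketched in a few lines of Python. The two input figures come from the paragraph above; the variable names are illustrative, and the decimal convention (1 petabyte = 1,000,000 gigabytes) is an assumption rather than something the article states.

```python
# Figures quoted in the article.
GIGABYTES_PER_YEAR = 15_000_000   # expected yearly output of the collider
IPOD_COUNT = 125_000              # number of iPods used in the comparison

# Assumption: decimal units, so 1 petabyte = 1,000,000 gigabytes.
GIGABYTES_PER_PETABYTE = 1_000_000

# Capacity per iPod implied by the comparison.
gb_per_ipod = GIGABYTES_PER_YEAR / IPOD_COUNT

# The same yearly volume expressed in petabytes.
petabytes_per_year = GIGABYTES_PER_YEAR / GIGABYTES_PER_PETABYTE

print(f"Implied capacity per iPod: {gb_per_ipod:.0f} GB")
print(f"Yearly data volume: {petabytes_per_year:.0f} PB")
```

In other words, the comparison implies a 120-gigabyte device, and the yearly total works out to about 15 petabytes under the decimal convention.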

Although access to the data is restricted to physicists in countries collaborating on the collider, the experiment’s global network taps the number-crunching ability of more than 140 computer centers in 33 countries. Much of the grid is designed to distribute the data for analysis locally, notes Ken Bloom, a physicist at the University of Nebraska and a member of one of CERN’s collision-detector teams.

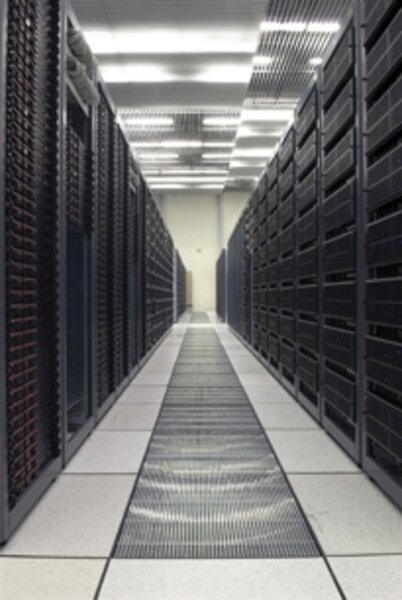

“In the good old days, you’d have one biggish computer at a lab doing all the data processing,” he says. But the volume of data expected to pour from the collider’s detectors is so large that a single-center arrangement wouldn’t work – the power and cooling requirements to keep the computers happy would be enormous. As a result, the data center at CERN makes only a first pass at any processing, then it archives the data and sends subsets out to a second layer of computing centers. Physicists enter the picture only after the information is further subdivided and sent speeding across the Internet to two more layers of computer centers – the last of which are at individual universities.
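The layered hand-off Bloom describes can be pictured with a toy model. The sketch below is only an illustration under stated assumptions: the `Center` class, the tier numbering, the fan-out of two centers per layer, and the round-robin split are all hypothetical, chosen to show how a central site can keep an archive while passing ever-smaller subsets down three further layers. It is not a description of how CERN's grid actually assigns data.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Center:
    """A hypothetical computing center in a layered grid."""
    name: str
    tier: int
    children: list[Center] = field(default_factory=list)
    received: list[int] = field(default_factory=list)


def build(tier: int, name: str, fanout: int = 2, depth: int = 3) -> Center:
    """Build a tree of centers: tier 0 at the top, tier `depth` at the bottom."""
    center = Center(name, tier)
    if tier < depth:
        center.children = [
            build(tier + 1, f"{name}.{i}", fanout, depth) for i in range(fanout)
        ]
    return center


def distribute(center: Center, events: list[int]) -> None:
    """Record what arrives at this center, then split it among its children.

    Assumption: a simple round-robin split; a real grid would use its own policy.
    """
    center.received = list(events)  # the top tier keeps the full archive
    n = len(center.children)
    for i, child in enumerate(center.children):
        distribute(child, events[i::n])


def leaves(center: Center) -> list[Center]:
    """Return the bottom-layer centers (the universities, in this toy model)."""
    if not center.children:
        return [center]
    return [leaf for child in center.children for leaf in leaves(child)]


# One central site plus three further layers, as in the description above.
root = build(0, "T0")
distribute(root, list(range(16)))  # 16 stand-in "events"

for leaf in leaves(root):
    print(leaf.name, "tier", leaf.tier, "holds", len(leaf.received), "events")
```

Each layer holds a smaller slice than the one above it, which is the point of the design: no single site has to supply the power and cooling for the whole workload.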

It’s there that the discoveries will emerge in countries ranging from Japan, the US, and European nations to Pakistan, Turkey, and Brazil.

“We’re doing what we can to level the playing field for people who are collaborators,” giving them equal access to the data, Dr. Bloom says.

By contrast, the US’s TeraGrid gives any university researcher in the country access to an enormous amount of processing power – at no cost other than the time it takes to fill out an application for computing time. The grid’s workhorse computers are housed at nine sites across the country and are being used for everything from modeling severe weather to modeling economic scenarios, notes John Towns, director of persistent infrastructure at the National Center for Supercomputing Applications at the University of Illinois at Urbana-Champaign.

Ironically, he says, despite the network’s ability to help drive science and broaden access to high-powered computing, “many people don’t know of the TeraGrid’s existence.”

If they did, it still might be the wrong tool, observes Gerhard Klimeck, a professor of electrical and computer engineering at Purdue University and an assistant director for the Network for Computational Nanotechnology. Large networks like TeraGrid are well suited to high-octane computational tasks, he says. But in fields like nanotechnology, a lot of work is done at the lab bench, where researchers with data and a question want an answer in minutes – not the hours it takes to fill out and process applications.

To fill this need, nanoHub was born. “Our goal was to put nanotechnology simulation tools in the hands of people who otherwise wouldn’t touch them with a 10-foot pole,” Dr. Klimeck says. It provides access to sufficiently sophisticated computing power and programs to serve the “I need the answer yesterday” crowd.

NanoHub began in 2002, has six universities at its core, and has grown from roughly 1,000 users to some 77,000 a year, Klimeck says. Perhaps as important is the growing number of research papers that credit nanoHub for providing computational tools. NanoHub has since spun off clones at Purdue. Because it’s readily available to anyone who signs up for an account (no formal application for computer time necessary), nanoHub is also used for engineering education, he says.

“E-Science is rapidly changing,” notes Indiana’s Dr. Fox. And with it comes the potential for an increasingly level playing field among researchers in the US and overseas, he says.