In robotics development, hand identifies objects


Robots could one day have the ability to assume most, if not all, of the predictable tasks currently done by humans, but only if robots can develop hands similar to those of humans, capable of acute recognition and detection. With this goal in mind, researchers at the Massachusetts Institute of Technology (MIT) recently revealed a robot hand, or gripper, capable of determining what objects it grasps.

Daniela Rus, director of the Distributed Robotics Lab at MIT, along with graduate students Bianca Homberg, Robert Katzschmann, and Mehmet Dogar, developed a “soft” robotic hand made from silicone that can be attached to existing robots, such as the Baxter manufacturing robot the team used for testing.

As opposed to “hard” hands, or grippers made from metal, the “soft hand’s compliance allows it to pick up objects that a rigid hand is not easily capable of without extensive manipulation planning,” wrote the researchers in their associated paper. “Through experiments we show that our hand is more successful compared to a rigid hand, especially when manipulating delicate objects that are easily squashed and when grasping an object that requires contacting the static environment.”

This, in itself, isn’t new. Soft materials, like silicone, have been previously used for grippers due to their superiority over hard materials, particularly in the food-service industry where robotic hands are used to package fruits and vegetables that can easily be damaged or crushed.

Soft Robotics Inc., for example, builds robotic grippers for use in “warehousing, manufacturing, and food-processing environments where the uncertainty and variety in the weight, size, and shape of products being handled has prevented automated solutions from working in the past.”

But these grippers are programmed for particular objects and are not capable of distinguishing between objects.

“Robots are often limited in what they can do because of how hard it is to interact with objects of different sizes and materials,” Ms. Rus explained in a press release. “Grasping is an important step in being able to do useful tasks; with this work we set out to develop both the soft hands and the supporting control and planning systems that make dynamic grasping possible.”

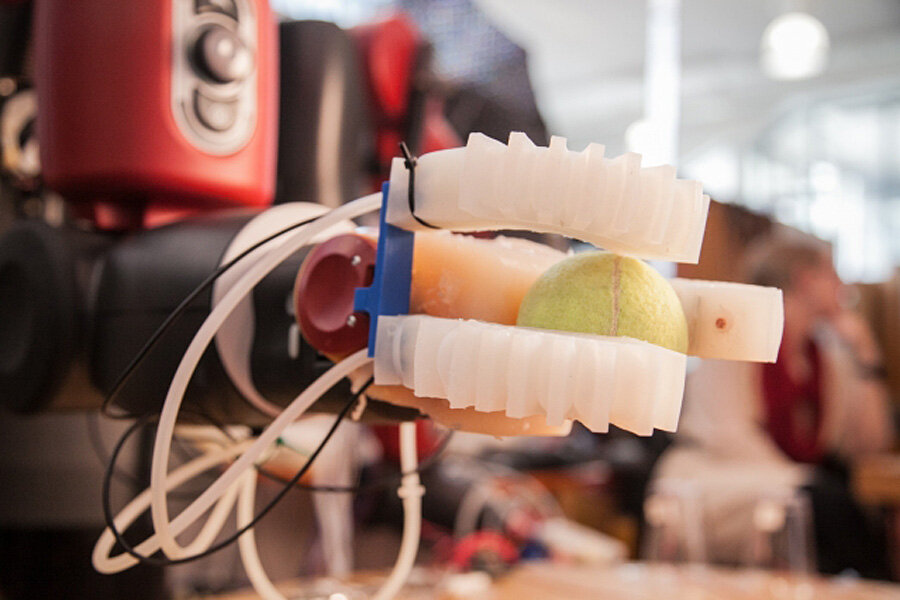

MIT’s gripper has three “fingers,” each of which has an embedded sensor and “channels in it that fill with air and allow the hand to wrap itself around an object,” from a thin sheet of paper to a tennis ball to a roll of duct tape, reported CNBC. “On-board sensors enable the robot to identify the object it’s grasping.”

“When the gripper hones in on an object, the fingers send back location data based on their curvature. Using this data, the robot can pick up an unknown object and compare it to the existing clusters of data points that represent past objects,” explains MIT. “With just three data points from a single grasp, the robot’s algorithms can distinguish between objects as similar in size as a cup and a lemonade bottle.”

For now, though, the robot isn’t capable of learning new objects by itself. “Engineers have to train the hand to recognize each object it’s picking up. They make the robot pick up a new object 10 times and then encode that training information in the robot’s software,” CNBC reported.
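To make the train-then-compare idea concrete, here is a minimal Python sketch. It is not the MIT team’s algorithm, which the reports quoted here do not spell out. It assumes a simple nearest-centroid rule: each known object is summarized by the average of its 10 training grasps, and a new grasp is assigned to the closest average. The function names, the object labels, and every curvature value below are invented for illustration.

```python
import math


def train_centroids(training_grasps):
    """Average each object's training grasps into one 3-value centroid.

    training_grasps maps an object label to a list of grasps; each grasp
    is a tuple of three curvature readings, one per finger (assumed format).
    """
    centroids = {}
    for label, grasps in training_grasps.items():
        count = len(grasps)
        centroids[label] = tuple(
            sum(grasp[i] for grasp in grasps) / count for i in range(3)
        )
    return centroids


def identify(reading, centroids):
    """Return the label whose centroid is nearest to the new reading."""
    return min(
        centroids,
        key=lambda label: math.dist(reading, centroids[label]),
    )


# Made-up curvature readings in arbitrary units: 10 training grasps per object.
training_grasps = {
    "cup": [(0.40 + 0.01 * k, 0.42, 0.41) for k in range(10)],
    "lemonade bottle": [(0.55 + 0.01 * k, 0.57, 0.56) for k in range(10)],
}

centroids = train_centroids(training_grasps)

# A single new grasp yields three data points, one per finger.
print(identify((0.43, 0.41, 0.42), centroids))  # prints "cup"
```

A rule this simple can only tell objects apart if their grasps fall into well-separated clusters, and like the hand described here, it recognizes nothing that an engineer has not first trained it on.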

“If we want robots in human-centered environments, they need to be more adaptive and able to interact with objects whose shape and placement are not precisely known,” Rus says. “Our dream is to develop a robot that, like a human, can approach an unknown object, big or small, determine its approximate shape and size, and figure out how to interface with it in one seamless motion.”

The team is presenting its research this week in Hamburg, Germany, at the International Conference on Intelligent Robots and Systems.

Also presenting at the conference are researchers from Carnegie Mellon, who developed a similar robotic hand. However, this team’s hand has 14 embedded strain sensors in each of its three fingers, which allow the robot “to determine where its fingertips are in contact and to detect forces of less than a tenth of a newton,” according to a press release.

“Hands are, in some ways, the frontier of robotics – and giving them fingers that can ‘think’ on their own is a big step towards making them more like our own,” wrote Gizmodo.