Microsoft says its software can tell if you're going back to prison

In a scenario that seems ripped straight from science fiction, Microsoft says its machine learning software can help law enforcement agencies predict whether an inmate is likely to commit another crime by analyzing his or her prison record.

In a series of videos and events at policing conferences, such as one on Oct. 6 at the Massachusetts Institute of Technology, Microsoft has been quietly marketing its software and cloud computing storage to law enforcement agencies.

It says the software could have several uses, such as allowing departments across the country to analyze social media postings and map them in order to develop a profile for a crime suspect. The push also includes partnerships with law enforcement technology companies, including Taser – the stun gun maker – to provide police with cloud storage for body camera footage that is compliant with federal standards for law enforcement data.

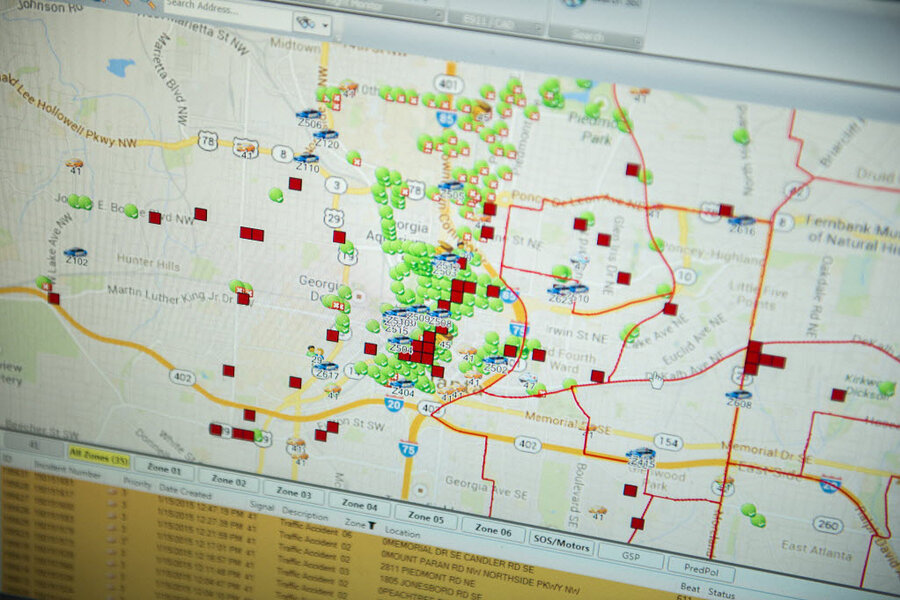

But in a more visionary – or possibly dystopian – approach, the company is also expanding into a growing market for what is often called predictive policing: using data to pinpoint the people most at risk of being involved in future crimes.

Predictive or preventative?

These techniques aren’t really new. A predictive approach — preventing crime by understanding who is involved and recognizing patterns in how crimes are committed — builds on efforts dating back to the early 1990s, when the New York City police began using maps and statistics to track areas where crimes occurred most frequently.

“Predictive policing, I think, is kind of a catch-all for using data analysis to estimate what will happen in the future with regard to crime and policing,” says Carter Price, a mathematician at the RAND Corporation in Washington who has studied the technology. “There are some people who think it’s like the movie ‘Minority Report’ ” — in which an elite police unit can predict crimes and make arrests before they occur — “but it’s not. No amount of data is able to give us that type of detail.”

Scholars caution that data analysis can reveal patterns and details about some types of crime, such as burglary or theft. For violent crime, however, such approaches can tell police who is at high risk of becoming a victim of violence; they cannot produce a list of potential offenders.

“Thinking that you do prediction around serious violent crime is empirically inaccurate, and leads to very serious justice issues. But saying, ‘This is a high risk place,’ lets you focus on offering social services,” says David Kennedy, a professor at John Jay College of Criminal Justice. In the 1990s, he pioneered an observation-driven approach that worked with local police in Boston to target violent crime. After identifying small groups of people in particular neighborhoods at high risk of either committing a crime or becoming a victim of violence, the program, Operation Ceasefire, engaged them in meetings with police and community members and presented them with a choice – either accept social services that were offered or face a harsh police response if they committed further crimes. It eventually resulted in a widespread drop in violent crime often referred to as the “Boston Miracle.”

The Operation Ceasefire program, Professor Kennedy says, was never meant to be predictive. But using data to discover patterns of how crimes are committed and map where they occur has been more successful, especially for lower-level crimes.

One approach

A few years ago, Cynthia Rudin, who teaches statistics and focuses on machine learning at the Massachusetts Institute of Technology, received an e-mail from a member of her department asking for help using data analysis to aid the Cambridge Police in solving burglaries.

The e-mail originally came from Lt. Daniel Wagner, the department’s lead crime analyst. When Rudin met with Lt. Wagner, she was surprised: he presented her with an academic paper suggesting one method for analyzing the crimes using a computer model, then said he thought it wouldn’t work.

“I read the paper, it was a really dense mathematical paper,” says Professor Rudin, an associate professor at MIT’s Sloan School of Management. When she came up with an alternate suggestion, Lt. Wagner was still skeptical. “I couldn’t believe this guy, so he shot my idea down, and I went back to the drawing board, and everybody liked that idea.”

Her eventual approach – using a machine learning tool to search the department’s existing records and analysis for particular patterns in how burglaries were committed – proved successful, resulting in a long-running partnership.

The software, which Rudin and doctoral student Tong Wang called Series Finder, works by identifying patterns based on information such as when and where a crime occurred, how the burglar broke into a home, and whether the victim was at home or on vacation at the time.
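To make the idea concrete, here is a minimal sketch of grouping burglaries by the kinds of details described above. It is not the Series Finder algorithm, whose internals the article does not describe. The `Burglary` fields, the equal weighting of the four attributes, the 2 km distance cutoff, and the 0.75 threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Burglary:
    # Hypothetical record layout, not the Cambridge Police schema.
    case_id: str
    hour: int        # hour of day, 0-23
    x_km: float      # location on an arbitrary local grid
    y_km: float
    entry: str       # how the burglar got in, e.g. "rear window"
    occupied: bool   # was the victim at home?


def similarity(a: Burglary, b: Burglary) -> float:
    """Score two burglaries from 0 (nothing alike) to 1 (identical profile)."""
    dh = abs(a.hour - b.hour)
    dh = min(dh, 24 - dh)                      # hours wrap around midnight
    time_score = 1 - dh / 12
    dist = hypot(a.x_km - b.x_km, a.y_km - b.y_km)
    place_score = max(0.0, 1 - dist / 2.0)     # assumed: no credit beyond 2 km
    entry_score = 1.0 if a.entry == b.entry else 0.0
    occupied_score = 1.0 if a.occupied == b.occupied else 0.0
    return (time_score + place_score + entry_score + occupied_score) / 4


def grow_series(seed: Burglary, records: list, threshold: float = 0.75) -> list:
    """Start from one burglary and keep adding records that resemble the group."""
    series = [seed]
    candidates = [r for r in records if r != seed]
    changed = True
    while changed:
        changed = False
        for r in list(candidates):
            avg = sum(similarity(r, s) for s in series) / len(series)
            if avg >= threshold:
                series.append(r)
                candidates.remove(r)
                changed = True
    return series
```

Given a seed burglary through a rear window in mid-afternoon, a routine like this would pull in nearby afternoon break-ins with the same entry method and leave out a late-night front-door burglary across town. An analyst would still need to review whatever the routine proposes.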

“Luckily, the Cambridge Police Department had kept really good records, so the computer was learning from what analysts had already written down,” she says. “It’s not like the computer was off on its own being artificially intelligent, it was supervised learning. When we ran Series Finder, it was able to correct mistakes in the database and it said ‘That isn’t right.’ ”

Series Finder supplements the work of human analysts, she says, by identifying crime patterns in minutes that might take an analyst hours of poring over records and databases.

“It would be nice if the computer automatically said, there’s a pattern here: there’s a pickpocket here every Tuesday and Thursday,” Rudin says. “How many crime series were not caught because those patterns weren’t detected? We’ll never know, because humans just aren’t that good at sorting through larger sets of data.”

A lucrative market

Professor Rudin was able to use grant funding to make her research possible, providing the Cambridge Police with access to Series Finder for free. The goal, she says, wasn’t to commercialize the software but to show how machine learning can help solve real-life crime problems.

But commercialization has been inevitable, as companies such as PredPol, which predicts crimes using software originally developed to forecast earthquakes, and Microsoft have expanded in a bid to attract more business from law enforcement agencies.

In one video tutorial for law enforcement agencies, Microsoft makes a sweeping claim. Using records pulled from a database of prison inmates and looking at factors such as whether an inmate is in a gang, his or her participation in prison rehabilitation programs, and how long such programs lasted, its software predicts whether an inmate is likely to commit another crime that ends in a prison sentence. Microsoft says its software is accurate 90 percent of the time.

“The software is not explicitly programmed but patterned after nature,” Jeff King, Microsoft’s principal solutions architect, who focuses on products for state and local governments, says in the video. “The desired outcome is that we’re able to predict the future based on that past experience, and if we don’t have that past experience, we’re able to take those variables and then classify them based on dissimilar attributes.”

Rudin has also been working on using machine learning to predict recidivism, but she pitches her research differently.

“[The models] don’t just give you probability, they give you reasons. You can use them for various decisions like bail or parole or social services,” she says. Ultimately, the goal isn’t profit, she adds, it’s developing tools that allow police to use data in ways that may help improve outcomes of the justice system.
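One way to picture a model that reports reasons alongside a probability is a simple additive score, sketched below. This is not Rudin's model or Microsoft's. The factor names echo the inputs mentioned earlier in the article, but the weights and baseline are invented purely for illustration and carry no empirical meaning.

```python
from math import exp

# Hypothetical weights, made up for this example only.
WEIGHTS = {
    "gang_member": 1.0,
    "completed_program": -1.0,
}
BASELINE = -1.0  # assumed starting score before any factor applies


def score_with_reasons(record: dict):
    """Return (probability, reasons) for a record of True/False factors."""
    total = BASELINE
    reasons = []
    for factor, weight in WEIGHTS.items():
        if record.get(factor):
            total += weight
            reasons.append((factor, weight))   # keep each contributing factor
    probability = 1 / (1 + exp(-total))        # logistic function maps score to 0-1
    return probability, reasons


probability, reasons = score_with_reasons({"gang_member": True})
print(f"{probability:.2f}", reasons)
```

Because every contribution is listed, a person reviewing the output can see which factors moved the estimate and by how much, which is harder to do with a model that returns only a number.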

Will predictive analysis help improve policing?

In its marketing materials, Microsoft has touted the impact of its cloud storage software, particularly on making police body cameras – long controversial for police departments – easier to use.

The Oakland Police Department, for example, has begun using Microsoft’s Azure cloud storage service for its body cameras. In Oakland, body cameras have reduced officers’ use of force by 60 percent since they were adopted in 2009, the department says.

The police credit the cameras with an 18-month stretch without officer-involved shootings, though there have been two shootings this summer, for which the department released its body camera video.

“Predictive policing will never replace traditional law enforcement, but it will certainly enhance the effectiveness of existing police forces, allowing for an informed and practical approach to crime prevention rather than merely making arrests after the fact,” wrote Kirk Arthur, Microsoft’s director of worldwide public safety and justice, in a blog post in January.

Predictive policing is still in its early stages, and some say the data it generates could have a mixed impact: the information could improve police transparency, but it could also lead to other problems.

“If police departments had access to social media accounts, and it turned out that crimes were being committed by people who liked a certain kind of music and a certain sports team, it could lead to certain kinds of racial discrepancies,” says Dr. Price, the RAND researcher. “It’s a useful tool, but it should always be done with [the idea of] keeping in mind how this will impact populations differently, and just sort of being cognizant of that when policies are put in place.”

But Kennedy, the criminology professor, says that for violent crimes, using data that shows crime risks to influence policing actions could have devastating consequences.

“People have been trying to predict violent crimes using risk factors for generations, and it’s never worked,” he says. “I think the inescapable truth is that, as good as the prediction about people may get, the false positives are going to swamp the actual positives ... and if we’re taking criminal action on an overwhelming pool of false positives, we’re going to be doing real injustice and real harm to real people.”
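Kennedy's point about false positives can be illustrated with base-rate arithmetic. The sketch below uses assumed numbers, none of which come from the article's sources: a population of 100,000, of whom 1 percent will go on to commit a violent crime, and a model that is right 90 percent of the time about both groups.

```python
def flagged_breakdown(population, base_rate, sensitivity, specificity):
    """Split the people a model flags into true and false positives."""
    will_offend = population * base_rate
    will_not_offend = population - will_offend
    true_positives = will_offend * sensitivity             # correctly flagged
    false_positives = will_not_offend * (1 - specificity)  # wrongly flagged
    precision = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, precision


# All four inputs are hypothetical values chosen for illustration.
tp, fp, precision = flagged_breakdown(100_000, 0.01, 0.90, 0.90)
print(f"correctly flagged: {tp:.0f}")
print(f"wrongly flagged: {fp:.0f}")
print(f"share of flags that are correct: {precision:.1%}")
```

Under these assumptions the model flags about 900 people correctly and about 9,900 wrongly, so only around 8 percent of those flagged would actually go on to offend. The rarer the crime, the more lopsided that ratio becomes.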