From Russia, with hashtags? How social bots dilute online speech

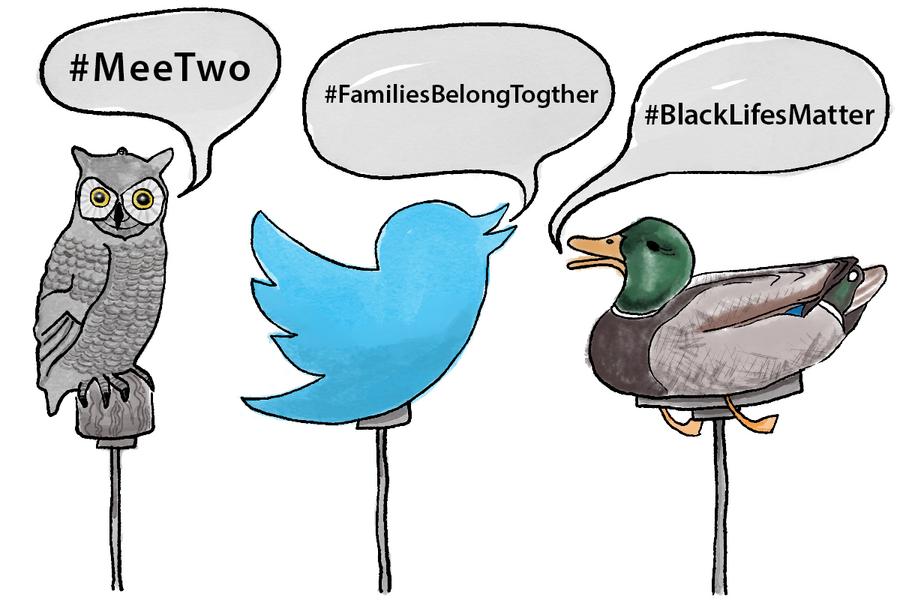

“On the Internet,” reads a well-known New Yorker cartoon published in 1993, “nobody knows you’re a dog.” While this notion of anonymity may seem quaint in the age of surveillance capitalism, it’s not quite so easy these days to tell humans from autonomous software agents, some of which are programmed to sabotage our political discourse. A group that monitors the top 600 pro-Kremlin Twitter accounts – many of which are automated accounts that post nearly 24 hours a day – found that a version of the hashtag #FamiliesBelongTogether with a subtle typo was the third most-tweeted hashtag on a weekend when thousands took to the streets to march against President Trump’s immigration policies. By diverting attention and propagating “noise” around the “signal” of actual online conversations, the bots apparently aimed to Balkanize the discourse to prevent it from gaining traction. “This is becoming more like a mind game,” says Onur Varol, a postdoctoral researcher at Northeastern University’s Network Science Institute.

Why We Wrote This

Hashtags have become the digital scaffolding around which social movements coalesce. The emergence of “decoy” hashtags threatens to dilute this newest method of activist organization.

If you search Twitter for the hashtag #FamiliesBelongTogether, a tag created by activists opposing the forcible separation of migrant children from their parents, you might be in for a pop proofreading quiz.

That’s because, in some locations in the United States, the top trending term, the one that Twitter automatically predicts as you type it, contains a small typo, like #FamiliesBelongTogther.

The misspelled hashtag, and others like it, have enjoyed unusual popularity on the social platform. Tweeters who have unwittingly posted versions include two senators and one congresswoman, the American Civil Liberties Union, MoveOn, and the actress Rose McGowan.

This is not an accident, say data scientists, but the result of a deliberate, automated misinformation campaign. The misspelled hashtags are decoys, aimed at diffusing the reach of the original by breaking the conversation into smaller groups. These decoys can dilute certain voices and distort public perception of beliefs and values.

“This is becoming more like a mind game,” says Onur Varol, a postdoctoral researcher at Northeastern University’s Network Science Institute. “If they can reach a good amount of activity, they are changing the conversation from one hashtag to another.”

In the past decade, hashtags have played a central role in grassroots political communication. Many of the movements that have shifted public perceptions of how society operates – the Arab Spring, Occupy Wall Street, Black Lives Matter, MeToo – gained early momentum via Twitter hashtags.

The #FamiliesBelongTogether decoys were likely propagated by automated accounts linked to Russia. As social media consultant Tim Chambers pointed out earlier this month, the Hamilton 68 dashboard, a tool that monitors the top 600 pro-Kremlin Twitter accounts, found that the decoy hashtag #FamilesBelongTogether was the third most-tweeted hashtag on June 30 and July 1, the weekend that thousands took to the streets to march against the president’s immigration policies.

By continually posting misspelled versions of the hashtag 24 hours a day, these automated accounts train Twitter’s search algorithms to see the misspelled versions as trending topics. The aim may be to create something of a spoiler effect, fragmenting the movement into subpopulations, each too small to gain traction.
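To give a sense of how small these typos are, here is a minimal sketch that flags hashtags sitting within one or two character edits of an original. It is only an illustration: the function names and the two-edit threshold are assumptions made for this example, and nothing here describes how Twitter or the Hamilton 68 dashboard actually works.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: the fewest single-character insertions,
    deletions, or substitutions needed to turn a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete from a
                            curr[j - 1] + 1,      # insert into a
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]


def likely_decoys(original: str, candidates: list[str], max_edits: int = 2) -> list[str]:
    """Return candidates that differ from the original by a few edits.
    The max_edits threshold is an arbitrary assumption for illustration."""
    target = original.lower()
    return [tag for tag in candidates
            if 0 < edit_distance(target, tag.lower()) <= max_edits]


# The two misspellings come from the article; the last tag is a made-up
# example of an unrelated hashtag that should not be flagged.
tags = [
    "#FamiliesBelongTogether",
    "#FamilesBelongTogether",
    "#FamiliesBelongTogther",
    "#KeepFamiliesTogether",
]
decoys = likely_decoys("#FamiliesBelongTogether", tags)
print(decoys)
```

Each decoy is a single dropped letter away from the original, which is why a reader typing quickly, or accepting an autocomplete suggestion, can easily miss the difference.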

By themselves, the decoy hashtags will do little to divide immigration activists, says Kris Shaffer, a researcher at New Knowledge, a cybersecurity firm that tracks how disinformation spreads online. “Sometimes what they’re doing is essentially digital reconnaissance,” he says, “like trying to figure out what techniques work and what the effects of the techniques are.”

Dr. Shaffer says that he has seen campaigns like this before. In 2014, following the arrests of several police and government officials for the kidnapping and murder of 43 Mexican college students, the hashtag #YaMeCanse, or “I am tired,” became an online hub for protests.

But it wasn’t long before top search results for #YaMeCanse on Twitter became populated with tweets including the hashtag but no other meaningful content. Pro-government accounts were flooding the channels with automated posts, drowning the signal with noise.

Digital Potemkins

In 1787, after Russia annexed Crimea for what would be the first of many times, Catherine the Great toured her newly acquired territory. According to legend, the region’s governor, Prince Grigory Potemkin, sought to make the peninsula appear more prosperous than it actually was by constructing portable facades of settlements, which were disassembled and reassembled along Catherine’s route.

In the modern era, Potemkin villages are built out of code. Social bots, accounts that can automatically post content, like posts, follow and unfollow users, and send direct messages, don’t just promote bad hashtags. They can also greatly distort our perceptions of which beliefs and values are popular and which are not.

“People tend to believe content with large numbers of retweets and shares,” says Dr. Varol. “This is unconsciously happening.”

A 2017 study led by Varol found that between 9 percent and 15 percent of all accounts on Twitter, up to 48 million accounts, may be bots.

The social media giant stepped up its war against false bandwagons last week, when it purged tens of millions of automated or idle accounts from its platform, reducing the user base’s collective follower count by about 6 percent.

“I believe the situation is much better right now,” says Varol. “Twitter is taking proactive behavior.”

Others are calling for more regulation of automated accounts. In the California Assembly, a version of Senate Bill 1001, which would make it illegal for bots to mislead humans as to who they really are, has left the Senate floor and is being taken up by the Committee on Appropriations. If passed, such a law could have ripple effects beyond California.

But until bots are required to identify themselves, or social platforms eradicate them, humans will have to remain on the lookout for their influence. “Slow down. Think carefully. Don’t retweet from your bed,” says Shaffer. “That can help.”